Small Language Models (SLMs): The Smart Shift Beyond LLMs

As AI adoption accelerates, enterprises are realizing that bigger is not always better. While Large Language Models (LLMs) have captured global attention, a quieter but more practical revolution is underway—Small Language Models (SLMs). SLMs are emerging as the right-sized AI for real-world applications where efficiency, privacy, and cost matter as much as intelligence.

GEN AI & APPLIED RESEARCH

1/11/2026 · 2 min read

What Is a Small Language Model (SLM)?

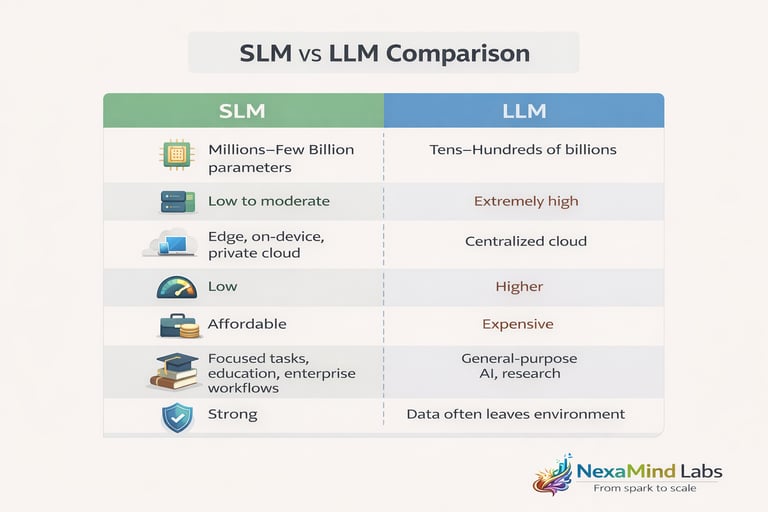

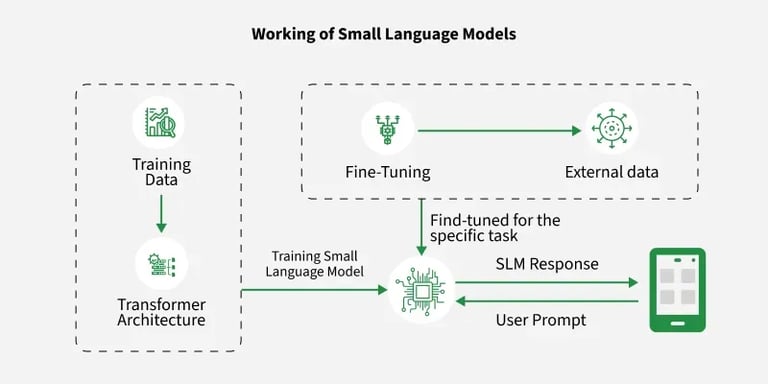

A Small Language Model (SLM) is a language model designed with significantly fewer parameters than an LLM, typically ranging from millions to a few billion parameters, compared to tens or hundreds of billions in LLMs.

Rather than trying to “know everything,” SLMs are:

Task-focused

Domain-optimized

Resource-efficient

They are often trained or fine-tuned for specific use cases such as customer support, document summarization, educational tutoring, or on-device AI.

Why SLMs Are Gaining Momentum

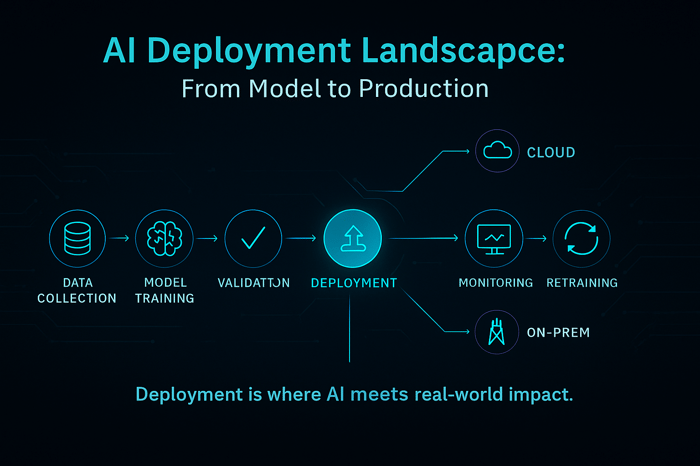

From an architectural standpoint, SLMs solve several practical deployment challenges that LLMs struggle with.

Key Advantages of SLMs

1. Lower Infrastructure Cost

Because weight memory and per-token compute scale roughly with parameter count, SLMs need far less compute, memory, and energy than LLMs, which makes them cost-effective for startups and enterprises alike.

2. Faster Inference

With fewer parameters to evaluate per token, SLMs typically respond faster on comparable hardware, enabling real-time use cases like chatbots, classroom tools, and mobile apps.

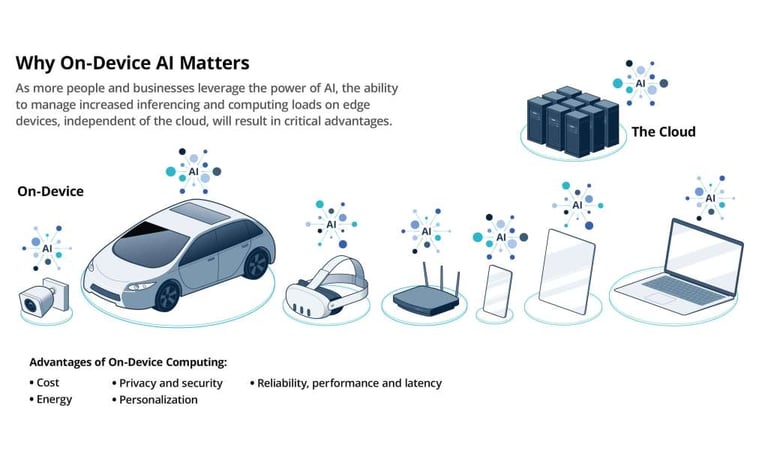

3. Edge & On-Device Deployment

SLMs can run on laptops, mobile devices, and IoT hardware—critical for offline and low-latency environments.

4. Better Data Privacy

When the model runs on-device or inside a private network, sensitive data never needs to leave it, which reduces compliance and security risks.

5. Easier Fine-Tuning

SLMs can be fine-tuned with less compute, and often with smaller datasets, making them well suited to domain-specific intelligence.
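To put rough numbers on the cost and on-device points above, here is a minimal Python sketch of the usual back-of-the-envelope rule: weight memory is approximately parameter count times bytes per parameter. The model sizes are illustrative and not tied to any specific model, and the estimate leaves out activations, the KV cache, and runtime overhead, so real memory use will be higher.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Assumption: dense model, weights only (no activations, KV cache, or overhead).

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Illustrative sizes only.
    for label, params in [("SLM, 3B params", 3e9), ("LLM, 70B params", 70e9)]:
        for precision in ("fp16", "int4"):
            gb = weight_memory_gb(params, precision)
            print(f"{label} @ {precision}: {gb:.1f} GB")
```

Under these assumptions, a 3-billion-parameter model needs about 6 GB for its weights at 16-bit precision and about 1.5 GB at 4-bit, which is within reach of a laptop or a recent phone. A 70-billion-parameter model needs about 140 GB at 16-bit, which generally means multiple server-class accelerators.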

Real-World Use Cases of SLMs

Educational platforms – curriculum-aligned tutors and learning assistants

Enterprise automation – internal knowledge bots and document processing

Healthcare & finance – privacy-sensitive NLP workflows

Mobile apps – AI features without cloud dependency

Edge AI systems – factories, retail, smart devices

SLMs are particularly effective when paired with retrieval systems (RAG, or retrieval-augmented generation) or rule-based logic: the retriever supplies the facts at query time, so the model does not need to memorize them.
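The pairing of an SLM with retrieval and rule-based logic can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a production design: `DOCUMENTS`, `retrieve`, `build_prompt`, `answer`, and `echo_model` are all hypothetical names, word overlap stands in for a real embedding search, and `echo_model` is a placeholder where an actual SLM call would go.

```python
import re
from typing import Callable

# Hypothetical in-memory knowledge base; a real system would use a vector store.
DOCUMENTS = [
    "Refunds are processed within 5 business days of approval.",
    "Support is available Monday to Friday, 9am to 5pm.",
    "Passwords can be reset from the account settings page.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Rank documents by word overlap with the query (stand-in for embedding search)."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Put the retrieved passages in front of the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{joined}\n\n"
        f"Question: {query}"
    )


def answer(query: str, generate: Callable[[str], str]) -> str:
    """Retrieve context, apply a rule-based guard, then call the model."""
    context = retrieve(query, DOCUMENTS)
    # Crude rule: refuse when the best match shares no words with the query.
    # Common words such as "the" also count as overlap, so this is only a sketch.
    if not tokenize(query) & tokenize(context[0]):
        return "Sorry, I don't have information on that."
    return generate(build_prompt(query, context))


def echo_model(prompt: str) -> str:
    """Placeholder for a real SLM call: returns the last line of the prompt."""
    return prompt.splitlines()[-1]
```

The model only ever sees the question plus a short, relevant context, which is why a small model can be enough: the knowledge lives in the document store, and the rule-based guard handles the cases the store does not cover.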

When Should You Choose an SLM?

For a technical architect, the decision usually comes down to a few criteria:

Choose an SLM when:

Your problem domain is well-defined

Latency and cost matter

Data privacy is critical

You need to deploy at scale across many devices

Choose an LLM when:

You need broad reasoning across unknown domains

Creativity and generalization outweigh efficiency
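One way to make the two checklists above explicit in a design review is to encode them as a small helper. The Python sketch below is only one possible encoding: the `Requirements` fields and the `recommend` function are hypothetical names, and the tie-breaking rule (mixed signals mean "evaluate both") is an assumption rather than part of the checklists.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    # Signals that favor an SLM
    well_defined_domain: bool
    latency_or_cost_sensitive: bool
    privacy_critical: bool
    multi_device_deployment: bool
    # Signals that favor an LLM
    broad_open_domain_reasoning: bool
    creativity_over_efficiency: bool


def recommend(req: Requirements) -> str:
    """Return "SLM", "LLM", or "evaluate both" from the checklist signals."""
    slm_signals = sum([
        req.well_defined_domain,
        req.latency_or_cost_sensitive,
        req.privacy_critical,
        req.multi_device_deployment,
    ])
    llm_signals = sum([
        req.broad_open_domain_reasoning,
        req.creativity_over_efficiency,
    ])
    if slm_signals and not llm_signals:
        return "SLM"
    if llm_signals and not slm_signals:
        return "LLM"
    # Assumption: mixed or absent signals call for a side-by-side evaluation.
    return "evaluate both"
```

For example, a privacy-critical support bot for a single product line sets only the SLM signals and gets "SLM", while a system that also needs broad open-domain reasoning gets "evaluate both".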

Final Thoughts: Right-Sized AI Is the Future

The AI ecosystem is maturing. We are moving from “largest possible model” thinking to “most appropriate model” design.

SLMs represent a strategic shift—AI that is:

Purpose-built

Economically sustainable

Architecturally elegant

In many real-world systems, SLMs aren’t a compromise—they’re an upgrade.